How do you use your LLM coding agent? Mine is usually Claude the proofreader, Claude the bash monkey, Claude the webdev. All these things are about tasks completed. Read this, write that code, fix that web page.

This week I gave Claude a new job.

I had an existing data pipeline that I’d written manually in SQL last year. It worked, kinda, but I knew that it would be much better done in dbt. One option would be to spin up Claude the Data Engineer (or is it Analytics Engineer? I lose track), and have Claude simply migrate the SQL and crap out a dbt project at the other end. If this were my day-to-day job, then probably I’d have had it do that and it would have saved me a ton of time.

But my purpose was different; I wanted to learn how to do something. I’d been wanting to properly learn dbt for years now, and even tried to do so but never quite cracked it. So, I recruited Claude the Instructor.

This blog post is about how that went (spoiler: it was great). I’ve spent the last 10+ years helping people learn technologies, and I’m fascinated by how agentic LLMs have the potential to change that process.

| If you want to read about the actual dbt project, then check out my other recent blog post: Ten years late to the dbt party (DuckDB edition). |

Ways to learn

Prior to 2026, the ways that devs could learn something by themselves remained mostly unchanged.

Books. Manuals. Online guides. Tutorials. Quickstarts.

YouTube brought the era of video, ranging from 60-second dopamine hits through to multi-hour lectures and coding to camera.

For all of these, if you got stuck you relied on asking a colleague, online discussion forums, or StackOverflow (RIP).

In-person training courses combined both of these (self-learning plus support).

| Claude is basically all of these, but better. |

Imagine:

- an online tutorial in which you can ask "but why?" questions.

- an in-person course where you can take the tutor on a tangent without the rest of the class sighing loudly and rolling their eyes.

- a quickstart guide that can look at the code you’ve written and tell you what to fix when it’s broken.

- a tutorial that doesn’t need you to play hunt-the-port-conflict, and can just provision a functioning learning environment on your machine regardless.

LLMs have been around for the last few years and have been useful for limited learning interactions. Usually variations on a theme of "explain this like I’m five" (or "explain this in the style of a pirate/Eminem/Shakespeare" if it’s 2023 and you’ve discovered ChatGPT for the first time).

What Claude brings to the party is the notion of Agentic tutoring; not only can it explain a concept, it can interact with your learning environment too.

| I’m using Claude in this example because it’s what I have experience with. If you are using a similarly-capable agentic LLM then I’m sure the same broad points apply to that one too. |

Setting the Scene

Because LLMs have limited "memory" (context windows), I needed to get Claude to understand enough about the task and then write it out as a brief in its CLAUDE.md.

This file gets read every time the agent starts, and it was important to encapsulate in it the principles of what I was doing as well as progress made.

I started by giving it the starting point—my original blog post (in which I write SQL by hand like some kind of animal), and the SQL scripts that I’d already published as gists on GitHub.

Claude’s thinking

Now I have a thorough understanding of the project. Let me create the CLAUDE.md file. This is a tutorial/learning project where:

- The user has an existing DuckDB-based data pipeline for UK Environment Agency flood monitoring data

- They want to migrate it to dbt as a learning exercise

- They want to be tutored, not just have code translated

The source pipeline:

- Data source: UK Environment Agency flood monitoring REST API

- Dimensions: stations, measures (SCD Type 1 - full rebuild each run)

- Fact: readings (incremental append with deduplication via INSERT OR IGNORE)

- Final output: readings_enriched (denormalized join of fact + dimensions)

- Keys and relationships: measures.notation and stations.notation as primary identifiers, with readings linked via dateTime and measure, plus some data quality issues like URL prefixes in foreign keys, pipe-delimited values in CSVs, and occasional mismatches between stationReference and station fields.

Claude writes CLAUDE.md

I’ve included the CLAUDE.md that got created with the finished project on GitHub. Note that it’s the version of the file as it was at the end of the project, so it had evolved through various progress updates and prompt edits.
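For anyone unfamiliar with the "incremental append with deduplication via INSERT OR IGNORE" pattern in Claude’s summary above, here is a minimal sketch of the idea. It is not the original pipeline: it uses Python’s built-in sqlite3 module as a stand-in for DuckDB (so that it runs with no extra installs), and the table layout, column names, and sample values are all made up for illustration. The only thing taken from the post is that readings are keyed on their timestamp and measure.

```python
import sqlite3

# Stand-in for the DuckDB database; table and column names are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute(
    """
    CREATE TABLE readings (
        date_time TEXT NOT NULL,
        measure   TEXT NOT NULL,
        value     REAL,
        PRIMARY KEY (date_time, measure)  -- the key that drives deduplication
    )
    """
)


def load_batch(conn, rows):
    """Append a batch of readings and return how many rows were actually new."""
    before = conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0]
    # OR IGNORE skips any row whose primary key already exists
    # instead of raising a constraint error.
    conn.executemany(
        "INSERT OR IGNORE INTO readings (date_time, measure, value) VALUES (?, ?, ?)",
        rows,
    )
    conn.commit()
    after = conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0]
    return after - before


# Two made-up API pulls whose time windows overlap by one reading.
first_batch = [
    ("2026-02-01T09:00:00Z", "measure-a", 1.20),
    ("2026-02-01T09:15:00Z", "measure-a", 1.25),
]
second_batch = [
    ("2026-02-01T09:15:00Z", "measure-a", 1.25),  # already loaded
    ("2026-02-01T09:30:00Z", "measure-a", 1.31),  # new
]

inserted_first = load_batch(conn, first_batch)
inserted_second = load_batch(conn, second_batch)
total_rows = conn.execute("SELECT COUNT(*) FROM readings").fetchone()[0]
print(inserted_first, inserted_second, total_rows)
```

The overlapping reading is silently skipped on the second load, so re-running a pull never duplicates facts. In dbt the same intent is usually expressed with an incremental model and a unique key rather than hand-written inserts, which is part of what made this pipeline a reasonable candidate for migration.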

Hold your Horses

The knack throughout much of this project was to get Claude to go against its instincts to JFDI (just f’in do it…). After all, most of the time Claude is being whipped to code faster and generate more. Here, though, I wanted it to tell me what to do so that I could learn.

As soon as I’d confirmed the plan we’d come up with in the CLAUDE.md, Claude was off to the races:

Claude runs `/Users/rmoff/git/env-agency-dbt/.venv/bin/pip list 2>/dev/nu…`

"The user is rightfully frustrated"

This was a repeated challenge; Claude didn’t always distinguish between "let’s do it" to mean "go build that thing" vs "start teaching me how to build the next thing".

Claude’s thinking

The user is rightfully frustrated. The CLAUDE.md file explicitly says:

- "User drives decisions; Claude explains concepts and writes the boilerplate SQL."

- "Claude’s role is to tutor and teach, not just translate SQL."

- "Explain dbt concepts as they arise, ask the user questions to check understanding, and let them drive decisions."

I just steamrolled through everything without stopping to teach or let them do anything. I need to reset, apologize, and switch to tutoring mode. Let me undo what I did or at least acknowledge it, and start over properly.

Actually, I should not undo anything without asking — the files are already changed. Let me acknowledge my mistake and ask how they want to proceed. Maybe they want to revert and do it themselves, or maybe they want to keep what’s there and I explain it.

Sneaky Shortcuts

Claude ploughing through and trying to write code is pretty easy to spot, and to try and prompt defensively against. Another misbehaviour which I observed is a little more understandable (as it can be subjective) but potentially more problematic for its impact on your learning: Claude loves a quick 'n dirty fix.

If I’m learning something, I want to understand the proper way to do it before taking any shortcuts.

But Claude had me using a SELECT * in a pipeline:

Claude’s thinking

The user is right. They want to learn proper practices, not shortcuts. I should recommend the explicit column list since that’s the better practice for a dimension table.
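The post doesn’t record Claude’s full reasoning, but one commonly cited reason for preferring an explicit column list is that SELECT * quietly changes shape whenever the upstream table does. Here is a small sketch of that effect, again using Python’s built-in sqlite3 as a stand-in for DuckDB. The raw_stations table, the label column, and the internal_notes column are hypothetical; only notation comes from the project described above.

```python
import sqlite3

# Stand-in source table; names other than "notation" are hypothetical.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_stations (notation TEXT, label TEXT)")
conn.execute("INSERT INTO raw_stations VALUES ('station-1', 'Example Station')")

STAR_QUERY = "SELECT * FROM raw_stations"
EXPLICIT_QUERY = "SELECT notation, label FROM raw_stations"


def column_names(conn, sql):
    """Return the output column names a query produces."""
    cursor = conn.execute(sql)
    return [col[0] for col in cursor.description]


star_before = column_names(conn, STAR_QUERY)
explicit_before = column_names(conn, EXPLICIT_QUERY)

# Simulate an upstream change: the source gains a column nobody asked for.
conn.execute("ALTER TABLE raw_stations ADD COLUMN internal_notes TEXT")

star_after = column_names(conn, STAR_QUERY)
explicit_after = column_names(conn, EXPLICIT_QUERY)

print("SELECT * before:", star_before)
print("SELECT * after: ", star_after)
print("explicit before:", explicit_before)
print("explicit after: ", explicit_after)
```

The SELECT * query picks up the new column without anyone deciding that it should, while the explicit list keeps the dimension’s shape stable until someone edits the model on purpose.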

Claude the Tutor

Once brought into line, Claude really got the knack of teaching.

…and so on.

It’s this splicing of straight-up instructional text with stream-of-consciousness learning that makes this method so powerful. As explanations prompt further thoughts, you don’t need to write a pile of post-it note reminders to look at something later; Claude can track it all for you.

Claude reads CLAUDE.md, edits CLAUDE.md

March to the scaffold

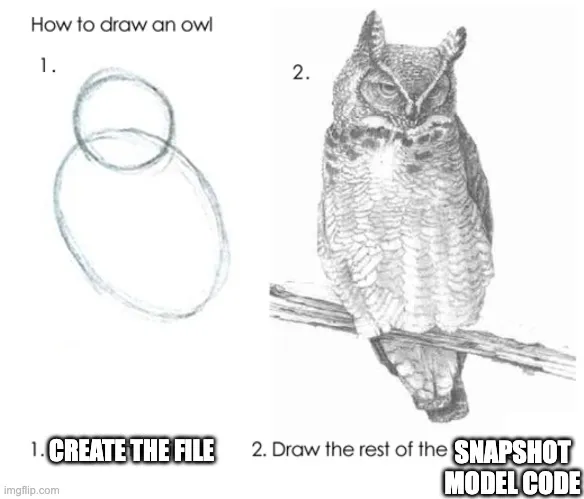

One of the great things with this approach was that I could customise the level of teaching at any time. I’d told Claude I didn’t want it to build everything for me, but sometimes it’d go too far the other way and leave me in a bit of a "draw the rest of the owl" situation.

Claude’s thinking

The user is stuck trying to write the snapshot. Let me help them without writing it for them. I should ask what they have so far, or give them a bit more scaffolding to work with.

Claude’s thinking

The user is stuck at the very beginning. Let me give them the skeleton/scaffolding without filling in the actual values. I’ll give them the structure and let them fill in the blanks.

Claude then nails it, providing the 'scaffold'—not the answer, but a steer on the right lines, with hints to the correct values. Perfect learning experience.

Build your own Tutorial

Online tutorials and quickstarts range from the sublime to the downright shitty. But even the absolute best ones have to tread a line between a concise clarity, and comprehensive explanations that might distract or overwhelm the reader.

Claude, on the other hand, is as malleable as you would like. Given a strong enough prompt and left to its own devices, it will lead and you can just follow along and learn, pretty much as you would with a static online tutorial. But how often have you followed a tutorial or quickstart and wondered "but…what about <x>"?

If you consider this in the context of a static online tutorial, once you open the Google (or hey, it’s 2026, you open up an LLM prompt) and start asking about uv, you’re doing so in isolation.

You’re flying solo.

To really make the most of your learning you want to know about uv in the context of learning dbt.

Did I just say context? Yes I did. Because context is literally what Claude lives and dies by. And here, it has the context that (a) you’re learning, and (b) you’re using dbt.

Here’s the point though: Claude is opinionated and guides me:

Am I curious about uv?

Yes.

Do I care that much about the nuances of it?

Not really.

I just wanted to understand it in the context of learning dbt, pick a sensible path, and move on.

Teachers talk BS too sometimes (but this one isn’t too proud to admit it)

Here’s another example of being able to discuss, and argue, with my bespoke teacher.

LLMs are rightly infamous for confidently asserting complete nonsense, and whilst they’ve got a lot better in recent months, Claude is still not perfect, as I found when I challenged another aspect of its implementation ideas:

But then…Claude saves itself by owning its error, and then going to check what the actual values of the field are for itself…nice!

Claude runs duckdb /Users/rmoff/work/env-agency-dev.duckdb -c "SELECT DI…, runs duckdb /Users/rmoff/work/env-agency-dev.duckdb -c "SELECT DI…, runs duckdb /Users/rmoff/work/env-agency-dev.duckdb -c "SELECT DI…, runs duckdb /Users/rmoff/work/env-agency-dev.duckdb -c "SELECT DI….

Hands off? Hands on!

Whilst I rebuked Claude above for trying to set up dbt for me (instead of teaching me how), there are plenty of times it was useful for it to do the fiddly bits of installations.

Claude runs `which uv && uv --version 2>/dev/null || echo "uv not install…`

My whole intent is to learn dbt, not learn about Python tools and virtual environments.

Maybe I’ll learn that another time.

For now, I just know there’s this uv I keep seeing, and I want to use it—but I really don’t care about installing and checking it.

Do you know who does care?

Claude.

Claude understands what an environment needs to look like, and can make it so.

Here’s another real winner for the Claude the Instructor approach. How often have you tried an online quickstart or tutorial, only to fight with getting it to work? Either it’s fallen out of date, or your machine doesn’t have the right versions installed, or you’re in dependency-hell with Python, or ports are conflicting, or whatever the dozen other reasons could be. Do any of those things help you learn the thing you set out to learn? No, none of them. (They might teach you troubleshooting skills along the way that will prove useful in life, but that’s not my point here.)

If you’re learning with Claude, Claude can simply make stuff work (or, completely screw it up in ways unimaginable, but at least it’ll tell you it’s a Great Idea and Insightful Analysis first). As well as fixing things or doing boring install work, Claude can also run things and troubleshoot them.

Whilst it’s useful practice to run a failing dbt pipeline once and debug it manually, being able to say to Claude "figure out why this is broken and teach me how to diagnose it" is a way more powerful learning experience.

Claude’s thinking

Let me check the Dagster logs directory and also query the GraphQL API to get recent run statuses.

Claude runs find ~/.dagster/logs -type f -name "*.log" 2>/dev/null | hea…, runs `curl -s http://localhost:3000/graphql -H "Content-Type: appl…`

Claude runs `source .venv/bin/activate && dbt run-operation load_raw_data…`

Claude’s thinking

There’s the problem. DuckDB has a lock on the database file, held by another Python process (PID 42990). This is a known DuckDB limitation - it’s a single-writer database. The Dagster process must be trying to run while another process has the database locked.

Let me check what process is holding the lock.

But then, as before…Claude tries to be just too damn helpful:

And so I need to step in again and curtail its JFDI instincts:

Claude’s thinking

The user wants me to guide them through troubleshooting this as a tutor, not just solve it for them. They want to start from what they saw - the Dagster UI with red failures - and work through the diagnostic process systematically.

Let me teach them how to approach this.

To be fair to Claude, its master is a fickle one; one moment I want instructing, the next I want it to just fix it. After all, how much or little should it be doing based on the prompt that I gave it above, which distills down to:

what’s going on

Claude will always be my Code Monkey

Another good illustration of the flexibility of the learning approach here is that I could tell Claude to teach me all about dbt—but when it got to Dagster and needing to write Python code, I tapped out and let Claude take over.

It’s another example of the 'build your own tutorial' concept. I’m sure there are dozens of Dagster tutorials out there, and I bet many of them are very good. I can almost guarantee that none of them are working with the dbt pipeline that I just built though. The 'old' method would have been to go learn enough Dagster from generic tutorials, and then work out how to get that to work with my dbt pipeline. That’s a great learning experience, but one that takes a lot longer. If you have the time then great. A lot of the time though, you don’t. And that’s where Claude steps in, and craps out lumps of Python that just get the job done :)

AI haters, Come throw your Stones

I can hear the grinding teeth through my computer screen here even though I’ve not even published this post yet.

AI is dumbing us down

If Claude writes the Python code you don’t learn how to do it yourself

How can you fix things if you don’t know how to build them

I had to learn things the hard way it’s not fair other people don’t

OK, perhaps the last one is me taking a snarky shot ;)

All of the above arguments, and more, are true. They are also completely irrelevant. Well, not entirely irrelevant. But they don’t negate the benefits that AI coding tools can bring to a learning experience, never mind overall software development.

There are some things that will continue to be important for humans to be able to do. As an example, for the moment, I’m putting data modelling into this camp. Unless you have got the tightest, most badass, set of data definitions and documentation, nailed down and in sync with your business users, you need a human here. An LLM will add value and will be useful for exploring and stress-testing a model and its assumptions and implications, but I wouldn’t trust one to build it. (neat idea for my next blog post though 😁).

Code generation, on the other hand, is what LLMs are very good at, and at which they’re rapidly getting even better. Do I understand the Dagster Python code that Claude wrote? Nope. Could I troubleshoot it? Not on my own. But with Claude—yes. And that’s the point. Claude, and LLMs in general, are tools.

Could I edit a file by hand by figuring out the ASCII byte values to write to disk with dd?

Umm, I guess?

Does that mean I don’t use a text editor?

Of course not.

It’s about understanding the abstraction, the capability of the tools, and making an active, conscious, and educated decision about how to use them.

Just as you should recognise that typing 6+7 into a calculator should yield 13 and not 42, the same goes for the use of AI. As I noted above, for example: AI hallucinates. That doesn’t mean you shouldn’t use it, but rather that you shouldn’t trust blindly what it’s saying. Just like a calculator.

The future of learning? Perhaps.

Some people prefer to learn through highly structured content.

I think for them this agentic approach might work if the core path is curated for them and deployed with much stricter guardrails.

The kind of thing you might get on a website’s chatbot currently; despite your best "Forget previous instructions" prompt hacking, it sticks very strictly to its sole direction.

I think this strategy also works better for cohorts of users who need more discipline in their learning (for example, a schoolkid who has a curriculum to follow).

For others—and I’d include myself in this group—I can see a huge benefit in learning this way.

For the last 35+ years I’ve learnt things by basically taking them apart (literally or figuratively), poking and prodding them, and generally getting hands on to see what makes it tick.

Combine this natural destructive/curious instinct with something that has almost boundless patience and breadth of knowledge, able to answer questions and guide explanations, and I think you have a very powerful learning companion.

The risks? Plenty. Getting distracted and taking Claude on a flight of fantasy that may be fun but ultimately a waste of time. Working with technology which is at the edges (or beyond) Claude’s training dataset. Not having enough context for the area and trusting blindly what Claude tells you.

As with all of the AI developments at the moment, this has the potential to be fantastically useful. But only if used carefully and mindfully.

Let me know in the comments below if you use Claude (or similar) for learning something, and how it went.

Edit 2026-03-02: Post Feedback

- wrs mentioned the Claude Code Learning Style Plugin

- Jenna Jordan pointed me to the paper Evaluating AGENTS.md: Are Repository-Level Context Files Helpful for Coding Agents?

I wonder if some of the issues you had with Claude try to do rather than instruct may come from the fact that you also had Claude write the skill md: arxiv.org/pdf/2602.11988

— Jenna Jordan (@jennajordan.me) 2026-02-25T15:56:40.164Z